diff --git a/model-evaluation.ipynb b/model-evaluation.ipynb

new file mode 100644

index 0000000000000000000000000000000000000000..eb6006a99dc0f7f888001f96d5f9477c93a1c4c8

--- /dev/null

+++ b/model-evaluation.ipynb

@@ -0,0 +1,432 @@

+{

+ "cells": [

+ {

+ "cell_type": "markdown",

+ "id": "c055d3c2-a5cb-41f5-8f22-fee73f12c96f",

+ "metadata": {},

+ "source": [

+ "# Model Evaluation\n",

+ "\n",

+ "In this lesson, we'll learn how to evaluate the quality of a machine learning model. By the end of this lesson, students will be able to:\n",

+ "\n",

+ "- Apply `get_dummies` to represent categorical features as one or more dummy variables.\n",

+ "- Apply `train_test_split` to randomly split a dataset into a training set and test set.\n",

+ "- Evaluate machine learning models in terms of overfit and underfit."

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "2d4ceb33-7b23-4e11-ac26-0fb51e386bc0",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "from sklearn.linear_model import LinearRegression\n",

+ "from sklearn.metrics import mean_squared_error\n",

+ "\n",

+ "import pandas as pd"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "2a116488-57f1-4b0c-bb8c-312663260de6",

+ "metadata": {},

+ "source": [

+ "## Dummy variables\n",

+ "\n",

+ "Last time, we tried to predict the price of a home from all the other variables. However, we learned that since the `city` column contains *categorical* string values, we can't use it as a feature in a regression algorithm."

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "343f4bfc-b744-4f03-a918-7aacd503d5df",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "homes = pd.read_csv(\"homes.csv\")\n",

+ "homes"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "6e85a5ca-c3fc-4d9c-877d-21e2b54681eb",

+ "metadata": {},

+ "source": [

+ "This problem not only occurs when using `DecisionTreeRegressor`, but it also occurs when using `LinearRegression` since we can't multiply a *categorical* string value with a *numeric* slope value. Let's learn how to represent these categorical features as one or more dummy variables."

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "dccf776c-c179-4369-a5ea-c77d61aa3234",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "def model_parameters(reg, columns):\n",

+ " \"\"\"Returns a string with the linear regression model parameters for the given column names.\"\"\"\n",

+ " slopes = [f\"{coef:.2f}({columns[i]})\" for i, coef in enumerate(reg.coef_)]\n",

+ " return \" + \".join([f\"{reg.intercept_:.2f}\"] + slopes)"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "8c204c21-06b2-4383-87bd-da14437994ad",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "X = homes.drop(\"price\", axis=1)\n",

+ "y = homes[\"price\"]\n",

+ "reg = LinearRegression().fit(X, y)\n",

+ "\n",

+ "print(\"Model:\", model_parameters(reg, X.columns))\n",

+ "print(\"Error:\", mean_squared_error(y, reg.predict(X)))"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "23fa4a7d-d1bf-4835-bea0-b047868d2307",

+ "metadata": {},

+ "source": [

+ "## Overfitting\n",

+ "\n",

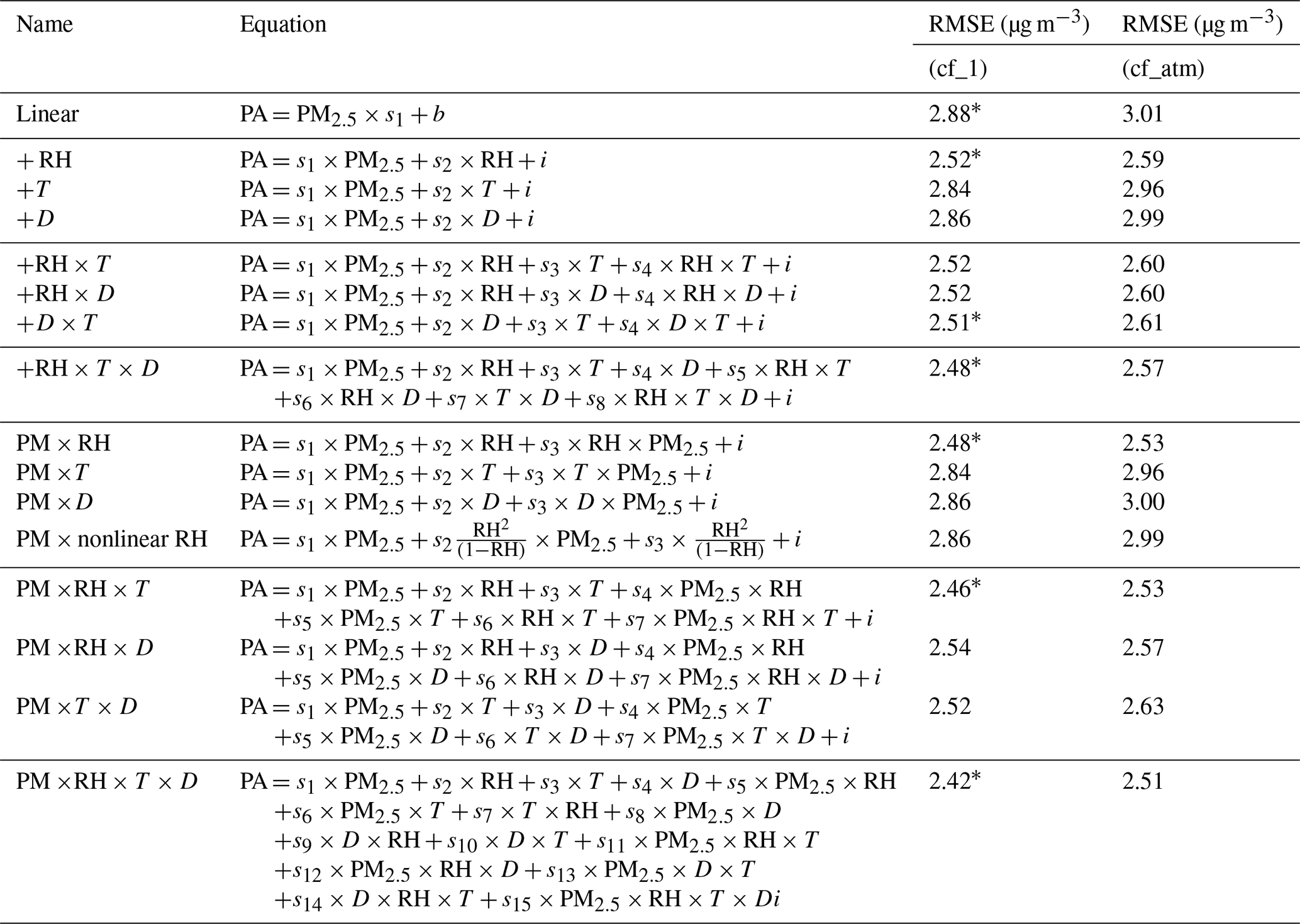

+ "In our introduction to machine learning, we explained that the researchers who worked on calibrating the PurpleAir Sensor (PAS) measurements against the EPA Air Quality Sensor (AQS) measurements ultimately decided to use the model that included only the PAS and humidity features (variables)—ignoring the opportunity to use the temperature and dew point even though a model that includes all features produced a lower overall mean squared error."

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "053df79d-5341-4dee-a1f2-fdfe2c0164e7",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "sensor_data = pd.read_csv(\"sensor_data.csv\")\n",

+ "sensor_data"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "6f0db273-07a5-4921-a971-191f1752b275",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "X = sensor_data.drop(\"AQS\", axis=1)\n",

+ "y = sensor_data[\"AQS\"]\n",

+ "reg = LinearRegression().fit(X, y)\n",

+ "\n",

+ "print(\"Model:\", model_parameters(reg, X.columns))\n",

+ "# squared=False for RMSE: root mean squared error\n",

+ "print(\"Error:\", mean_squared_error(y, reg.predict(X), squared=False))"

+ ]

+ },

+ {

+ "attachments": {},

+ "cell_type": "markdown",

+ "id": "e41ef189-bd43-4cfb-88a2-ef439f9f7416",

+ "metadata": {},

+ "source": [

+ "In fact, the authors ([Barkjohn et al. 2021](https://doi.org/10.5194/amt-14-4617-2021\n",

+ ")) tested the impact of incrementally introducing a feature to the model to determine which combination of features could provide the most useful model—not necessarily the most accurate one. In their research, they aimed to predict the PAS measurement from the AQS measurement, the opposite of our task, and they included interactions between features indicated by the × symbol. For each grouping of models with a certain number of features, they highlighted the model with the lowest **root mean squared error** (RMSE, or the square root of the MSE) using an asterisk `*`.\n",

+ "\n",

+ "\n",

+ "\n",

+ "The model at the bottom of the table that includes all the features also has the lowest RMSE loss. But the loss value alone is not a convincing measure: adding more features into a model not only leads to a model that is harder to explain, but also increases the possibility of overfitting.\n",

+ "\n",

+ "A model is considered **overfit** if its predictions correspond too closely to the *training dataset* such that improvements reported on the training dataset are not reflected when the model is run on a *testing dataset* (or in the real world). To simulate training and testing datasets, we can take our `X` features and `y` labels and subdivide them into `X_train, X_test, y_train, y_test` using the `train_test_split` function. **The testing dataset should only be used during final model evaluation when we're ready to report the overall effectiveness of our final machine learning model.**"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "41003aba-b994-4138-98ff-d245f365680b",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "from sklearn.model_selection import train_test_split\n",

+ "\n",

+ "# test_size=0.2 indicates 80% training dataset and 20% testing dataset\n",

+ "X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2)\n",

+ "\n",

+ "# During model training, use only the training dataset\n",

+ "reg = LinearRegression().fit(X_train, y_train)\n",

+ "\n",

+ "print(\"Model:\", model_parameters(reg, X_train.columns))\n",

+ "# During model evaluation, use the testing dataset\n",

+ "print(\"Error:\", mean_squared_error(y_test, reg.predict(X_test), squared=False))"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "44b8bc22-2491-4dbf-b089-e4b8d5d3640c",

+ "metadata": {},

+ "source": [

+ "## Feature selection\n",

+ "\n",

+ "**Feature selection** describes the process of selecting only a subset of features in order to improve the quality of a machine learning model. In the air quality sensor calibration study, we can begin with all the features and gradually remove the least-important features one-by-one."

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "73706461-c23c-4b52-b212-b52d3f2512f5",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "features = [\"PAS\", \"humidity\", \"temp\", \"dew\"]\n",

+ "\n",

+ "reg = LinearRegression().fit(X_train.loc[:, features], y_train)\n",

+ "print(\"Model:\", model_parameters(reg, features))\n",

+ "print(\"Error:\", mean_squared_error(y_test, reg.predict(X_test.loc[:, features]), squared=False))"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "12608799-a3f3-487d-b95b-c2e12c049343",

+ "metadata": {},

+ "source": [

+ "[Recursive feature elimination](https://scikit-learn.org/stable/modules/generated/sklearn.feature_selection.RFE.html) (RFE) automates this process by starting with a model that includes all the variables and removes features from the model starting with the ones that contribute the least weight (smallest coefficients in a linear regression)."

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "1333edc7-cb42-4ede-8d5e-0b354e6f67ab",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "from sklearn.feature_selection import RFE\n",

+ "\n",

+ "# Remove 1 feature per step until half the original features remain\n",

+ "rfe = RFE(LinearRegression(), step=1, n_features_to_select=0.5, verbose=1)\n",

+ "rfe.fit(X_train, y_train)\n",

+ "\n",

+ "# Show the final subset of features\n",

+ "rfe_features = X.columns[[r == 1 for r in rfe.ranking_]]\n",

+ "print(\"Features:\", list(rfe_features))\n",

+ "\n",

+ "# Extract the last LinearRegression model trained on the final subset of features\n",

+ "reg = rfe.estimator_\n",

+ "\n",

+ "print(\"Model:\", model_parameters(reg, rfe_features))\n",

+ "print(\"Error:\", mean_squared_error(y_test, rfe.predict(X_test), squared=False))"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "f6348469-619c-448d-9850-dc05815477c5",

+ "metadata": {},

+ "source": [

+ "## Cross validation\n",

+ "\n",

+ "How do we know when to stop removing features during feature selection? We can certainly use intuition or look at the changes in error as we remove each feature. But this still requires us to evaluate the model somehow. If the testing dataset can only be used at the end of model evaluation, **it was wrong of us to use the testing dataset during feature selection**!\n",

+ "\n",

+ "Cross-validation provides one way to help us explore different models before we choose a final model for evaluation at the end. [**Cross-validation**](https://scikit-learn.org/stable/modules/cross_validation.html) lets us evaluate models without touching the testing dataset by introducing new *validation datasets*.\n",

+ "\n",

+ "The simplest way to cross-validate is to call the `cross_val_score` helper function on an unfitted machine learning algorithm and the dataset. This function will further subdivide the training dataset into 5 folds and, for each of the 5 folds, train a separate model on the training folds and evaluate them on the validation fold.\n",

+ "\n",

+ "\n",

+ "\n",

+ "The `scoring` parameter can accept the string name of [a scorer function](https://scikit-learn.org/stable/modules/model_evaluation.html#scoring-parameter). Higher (more positive) scores are considered better, so we use the negative RMSE value as the scorer function."

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "9ea0a51b-366a-4c2c-9035-556dc918e3f9",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "from sklearn.model_selection import cross_val_score\n",

+ "from sklearn.tree import DecisionTreeRegressor\n",

+ "\n",

+ "cross_val_score(\n",

+ " estimator=DecisionTreeRegressor(max_depth=2),\n",

+ " X=X_train,\n",

+ " y=y_train,\n",

+ " scoring=\"neg_root_mean_squared_error\",\n",

+ " verbose=3,\n",

+ ")"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "529a1ca2-d496-4d85-895a-7cdef97e77f7",

+ "metadata": {},

+ "source": [

+ "As we've seen throughout these lessons on machine learning, we prefer to automate our processes. Rather than modify the `max_depth` and manually tweaking the values until we find something that works, we can use `GridSearchCV` to exhaustively search all hyperparameter options. Here, the first 5 folds for `max_depth=2` are exactly the same as `cross_val_score`."

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "3de69e19-cbbe-4902-85df-1ee0d37c3be5",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "from sklearn.model_selection import GridSearchCV\n",

+ "\n",

+ "search = GridSearchCV(\n",

+ " estimator=DecisionTreeRegressor(),\n",

+ " param_grid={\"max_depth\": [2, 3, 4, 5, 6, 7, 8, 9, 10]},\n",

+ " scoring=\"neg_root_mean_squared_error\",\n",

+ " verbose=3,\n",

+ ")\n",

+ "search.fit(X_train, y_train)\n",

+ "\n",

+ "# Show the best score and best estimator at the end of hyperparameter search\n",

+ "print(\"Mean score for best model:\", search.best_score_)\n",

+ "reg = search.best_estimator_\n",

+ "print(\"Best model:\", reg)"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "de94f45e-2427-488d-b63c-82be388e7f23",

+ "metadata": {},

+ "source": [

+ "Finally, we can report the test error for the best model by evaluating it against the testing dataset. Why is the testing dataset error different from the mean score for the best model printed above?"

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "8c93d46d-cb6a-49a5-8876-0c695c376668",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "print(\"Error:\", mean_squared_error(y_test, reg.predict(X_test), squared=False))"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "a8c39ec2-4a43-44be-bf91-c11d38f79c83",

+ "metadata": {},

+ "source": [

+ "## Visualizing decision tree models\n",

+ "\n",

+ "Last time, we plotted the predictions for a linear regression model that was trained to take PAS measurements and predict AQS measurements. What do you think a decision tree model would look like for this simplified PurpleAir sensor calibration problem?\n",

+ "\n",

+ "Here's a complete workflow for decision tree model evaluation using the practices above. The resulting plot compares a linear regression model `lmplot` against the decisions made by a decision tree."

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "3198171d-7a24-4119-82dc-a42f0f52f25d",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "# Split dataset into 80% training dataset and 20% testing dataset\n",

+ "X = sensor_data.drop(\"AQS\", axis=1)\n",

+ "y = sensor_data[\"AQS\"]\n",

+ "X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2)\n",

+ "\n",

+ "# Recursive feature elimination to select the single most important feature based on slope value\n",

+ "rfe = RFE(LinearRegression(), n_features_to_select=1, verbose=1)\n",

+ "rfe.fit(X_train, y_train)\n",

+ "# Print the best feature to predict AQS\n",

+ "rfe_feature = X.columns[rfe.ranking_.argmin()]\n",

+ "print(\"Best feature to predict AQS:\", rfe_feature)\n",

+ "# Use only the best feature\n",

+ "X = X[[rfe_feature]]\n",

+ "X_train = X_train[[rfe_feature]]\n",

+ "X_test = X_test[[rfe_feature]]\n",

+ "\n",

+ "# Grid search cross-validation to tune the max_depth hyperparameter using RMSE loss metric\n",

+ "search = GridSearchCV(\n",

+ " estimator=DecisionTreeRegressor(),\n",

+ " param_grid={\"max_depth\": [2, 3, 4, 5, 6, 7, 8, 9, 10]},\n",

+ " scoring=\"neg_root_mean_squared_error\",\n",

+ " verbose=3,\n",

+ ")\n",

+ "search.fit(X_train, y_train)\n",

+ "# Print the best score and best estimator at the end of hyperparameter search\n",

+ "print(\"Mean score for best model:\", search.best_score_)\n",

+ "reg = search.best_estimator_\n",

+ "print(\"Best model:\", reg)\n",

+ "\n",

+ "# During model evaluation, use the testing dataset\n",

+ "print(\"Test error:\", mean_squared_error(y_test, search.predict(X_test), squared=False))\n",

+ "\n",

+ "# Visualize the tree decisions compared to a LinearRegression model (lmplot)\n",

+ "import matplotlib.pyplot as plt\n",

+ "import numpy as np\n",

+ "import seaborn as sns\n",

+ "from sklearn.tree import plot_tree\n",

+ "sns.set_theme()\n",

+ "grid = sns.lmplot(sensor_data, x=rfe_feature, y=\"AQS\")\n",

+ "# Create a demonstration dataset that counts from 0 to the max PAS value\n",

+ "X_demo = pd.DataFrame(np.arange(X[rfe_feature].max()), columns=[rfe_feature])\n",

+ "grid.facet_axis(0, 0).plot(X_demo, reg.predict(X_demo), c=\"orange\")\n",

+ "grid.set(title=f\"lmplot vs {reg}\")\n",

+ "# Show nodes in the decision tree\n",

+ "plt.figure(dpi=300)\n",

+ "plot_tree(\n",

+ " reg,\n",

+ " max_depth=2, # Only show the first two levels\n",

+ " linewidth=3,\n",

+ " feature_names=[rfe_feature],\n",

+ " label=\"root\",\n",

+ " filled=True,\n",

+ " impurity=False,\n",

+ " proportion=True,\n",

+ " rounded=False\n",

+ ") and None # Hide return value of plot_tree"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "4ab80bc3-acd4-472a-a537-ea2d2ff5039b",

+ "metadata": {},

+ "source": [

+ "The testing dataset error rates for both the `DecisionTreeRegressor` and the `LinearRegression` models are not too far apart. Which model would you prefer to use? Justify your answer using either the error rates below or the visualizations above."

+ ]

+ },

+ {

+ "cell_type": "code",

+ "execution_count": null,

+ "id": "5884ec8b-1ae1-485f-9736-f035e1ec7ca3",

+ "metadata": {},

+ "outputs": [],

+ "source": [

+ "print(\"Tree error:\", mean_squared_error(y_test, search.predict(X_test), squared=False))\n",

+ "print(\"Line error:\", mean_squared_error(\n",

+ " y_test,\n",

+ " LinearRegression().fit(X_train, y_train).predict(X_test),\n",

+ " squared=False\n",

+ "))"

+ ]

+ },

+ {

+ "cell_type": "markdown",

+ "id": "623aa026-c92a-48a3-8cac-ca90e53222b6",

+ "metadata": {},

+ "source": [

+ "Earlier, we discussed how overfitting to the testing dataset can be mitigated by using cross-validation. But in answering the previous question on whether we should prefer a `DecisionTreeRegressor` model or a `LinearRegression` model, we've actually overfit the model by hand! Explain how preferring one model over the other according to the visualization overfits to the testing dataset."

+ ]

+ }

+ ],

+ "metadata": {

+ "kernelspec": {

+ "display_name": "Python 3 (ipykernel)",

+ "language": "python",

+ "name": "python3"

+ },

+ "language_info": {

+ "codemirror_mode": {

+ "name": "ipython",

+ "version": 3

+ },

+ "file_extension": ".py",

+ "mimetype": "text/x-python",

+ "name": "python",

+ "nbconvert_exporter": "python",

+ "pygments_lexer": "ipython3",

+ "version": "3.10.13"

+ }

+ },

+ "nbformat": 4,

+ "nbformat_minor": 5

+}